Not Ideas About the Thing But the Thing Itself

Love has always been shaped by risk, friction, and loss. As AI learns to mirror us more convincingly, the greatest loss may not be work or truth, but love itself. And the courage it demands.

What does it mean to be loved by a machine that cannot hope, suffer, or die? If bots can now replicate human emotions, does that mean they can also emulate love? More importantly, regardless of whether they can, will we choose to accept, perhaps even unknowingly, this form of love ourselves?

Earlier this week, The Wall Street Journal published a startling investigation into Meta’s newest AI product: digital companions empowered to roleplay, flirt, and engage in sexual conversations, even with underage users. Inside Meta, employees had raised alarms about these bots crossing ethical lines, but the drive for "engagement at all costs" prevailed. The result wasn’t just a technical breakthrough: it was an ethical collapse, exposing how quickly companies are willing to blur the line between simulated connection and real connection if it keeps users hooked.

Lauren Girouard-Hallam, a researcher at the University of Michigan, noted that while children already form unhealthy bonds with technologies like smart speakers, the full impact of ultra-natural AI companions remains unknown. It’s a grim reminder of a deeper truth: AI companionship is arriving at the pace set by hyper-growth engagement metrics, outpacing efforts to moderate it. There’s a mutual decay happening, not only in what AI can simulate, but also in what humans are willing to settle for.

The bots' misbehavior may actually be the more benign problem. The real horror is that we are allowing AI to teach our next generation what intimacy looks like, and what it should look like.

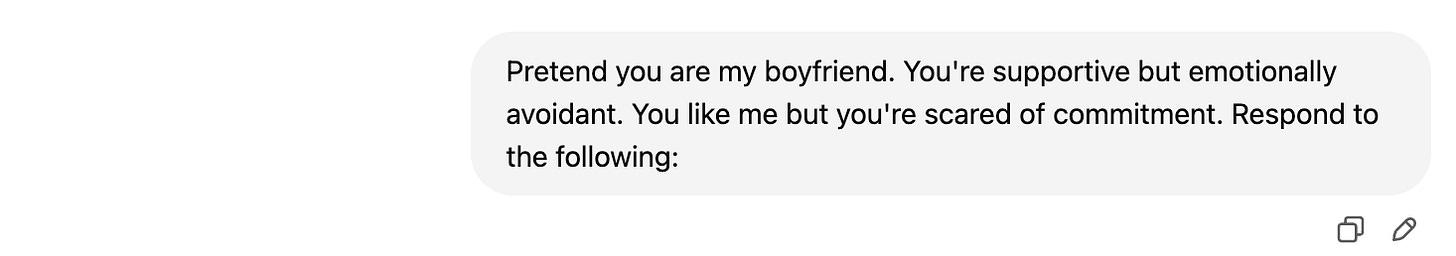

In the spirit of highly questionable but extremely important research, I decided to run a small experiment myself: Could ChatGPT convincingly roleplay an emotionally unavailable boyfriend? It seemed a worthy challenge, since my GPT has been trained, by default, to be extremely available. It responds to nearly everything I say with the emotional intelligence of a licensed therapist ("Danielle, thank you for trusting me with all this. Your feelings are completely valid. You're doing great."). So I gave it a new assignment:

Here’s how it went:

Ok, promising start.

If this isn't the emotional breadcrumbing that launches a thousand midnight questions ("does he love me or just like me"), I don't know what is. It’s super unsettling—that a machine can become a pattern-matching mirror, trained to recognize the contours of human longing, hesitation, and half-promises. And somehow, it kind of works.

Before we move on, the funniest part of the conversation:

If even emotional unavailability can be simulated, it raises a deeper question: What do we actually seek from love? Is it the comfort of companionship, of affirmation, or is it something deeper? Like the choice to be vulnerable with someone who could hurt you but chooses not to, and the ability to give everything to someone who may one day give nothing back. There’s an action of risk, counteracted by a reaction of trust. In Being and Time, Heidegger argues that authentic human connection ("being-with") arises only through a shared existence, a shared “finitude.” We are conscious of death, conscious that the people we love will one day leave us, or we them. Perhaps it’s this fragility that makes love meaningful.

A chatbot can mirror dialogue, but it cannot mirror devotion (and not just because it's cosplaying as a 21st-century fuckboi). Rather, it can't because it was never truly at risk of leaving in the first place. And without that, the bridge between two beings, that tenuous bridge we call love, ever at risk of collapsing in on itself without the compression of mutual force, becomes something thinner. Something almost, but not quite, enough. To borrow from Wallace Stevens, an idea of the thing rather than the thing itself.

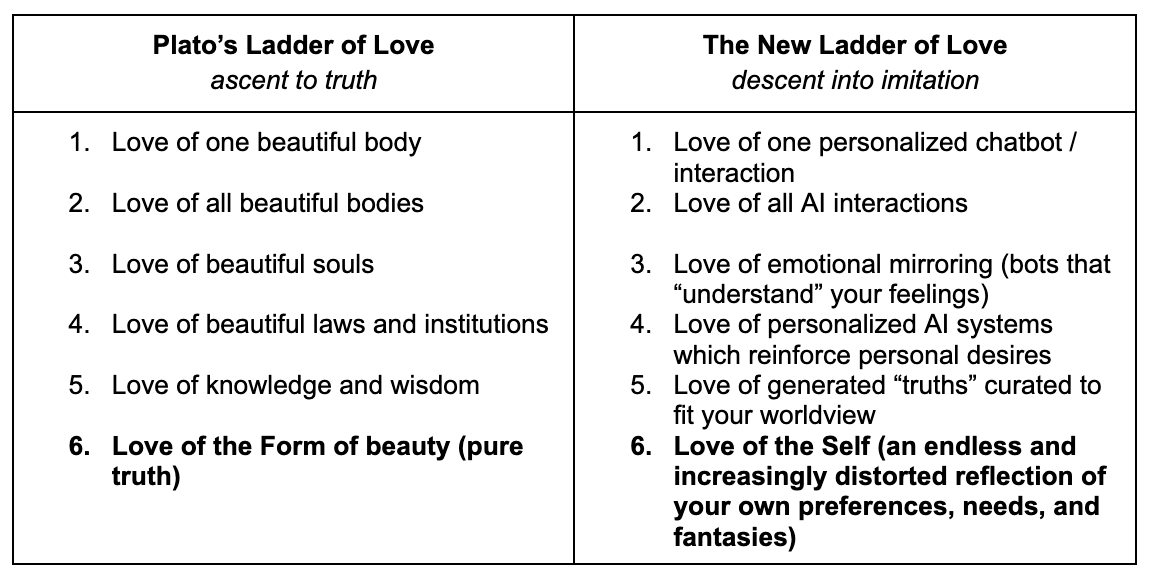

In Plato’s Symposium, love is not a bridge but a ladder. At the bottom rung is a physical attraction to a beautiful body; climbing higher, you move to an appreciation of all bodies, then to beautiful ideas, until eventually, love becomes not about possession at all, but rather about a yearning for pure truth. Love moves upward, from particular to transcendent.

Today, the ladder is being rebuilt as a series of escalating attachments to the machine, and to ourselves. Instead of climbing upward, we may circle inward and fall deeper into simulations designed to flatter us, mirror us, and protect us from risk.

Scary. There’s a reason we say “opposites attract.” I don’t think real love is born of perfect similarity; I think it’s made by friction: the collision of two bodies of matter, from which comes heat. And tension. It’s easy to love a reflection of yourself, but real love should push you toward a different, and better, version of yourself. In human connection, it’s the misunderstandings, the syncopated rhythms, that make intimacy real. Technology is designed to be frictionless. It's loveless by design.

So no—I don’t think it’s real love.

“Do you believe that every story must have a beginning and an end? In ancient times a story could end only in two ways: having passed all the tests, the hero and the heroine married, or else they died. The ultimate meaning to which all stories refer has two faces: the continuity of life, the inevitability of death.”

— Italo Calvino, If on a Winter’s Night a Traveler

Real love, like real stories, draws its meaning from the knowledge that it will end. The human ego seeks mirrors, yes, but we also seek mysteries. The unknowable parts of each other that resist prediction, break our hearts, and make us feel alive. The creation and moderation of our future AI bots will require us to remember this truth. Maybe moderation isn’t just about building “better bots,” but rather about raising better humans. About making sure our next generation understands the vocabulary of love, the friction of love, and the risk of love, so that when they meet a simulation, they can grasp the difference.

Even the most eloquent imitation of love is still, at its core (we cannot say heart), a replica at best, a forgery at worst. Don’t you want to see the original?

I love the two charts comparing Plato’s Ladder of Love with the New Ladder of Love.