The Permanent Underclass Has Good GMAT Scores

On credentials, Claude Code, and going swimming anyway

I am spending my final months at Stanford taking leisurely bike rides to the pool, talking to my OpenClaw agent about nothing in particular, taking mental snapshots of the last light turning the sky behind Hoover Tower a beautiful azure, and then rage-deleting all of my agents (are agents the new tabs?) and spending my Saturdays not using anything AI at all. Something artificial and something whimsical.

Last week I had an admittedly wild conversation with my partner, who, for the record, is more AI-pilled than I am. I had a dream of connecting all of my favorite apps—GCal, Granola, Gmail, Notes—into one priority list and dashboard to rule them all. He looked at me as if I had built an elaborate Rube Goldberg contraption with the sole purpose of serving myself a single peanut.

“What’s the problem?” he asked.

“I can’t remember all the things I need to do.”

“So write them down.”

“That’s too much work.”

“Isn’t that,” he asked, “the one kind of work you shouldn’t leave up to AI??”

I built the tool anyway. This is, I’ve come to understand, a very GSB thing to do.

At risk of projecting, the specific texture of our current moment at Stanford is dread wearing enthusiasm’s clothes. There is the usual—relatively justified, absolutely out of touch—job anxiety that perennially plagues the class of people who have worked their asses off all their lives to score above the 97th percentile. There is also the usual “new thing” enthusiasm, now channelled into vibe-coding workshops with overflow rooms, AI speakers every week, and experiential classes that credentialize your harassment of 100 strangers to “build” your next big stealth idea. But this enthusiasm only thinly masks deeper anxieties: of being left behind, of obsolescence, of destitution.

Will the category of job you trained for not exist by the time you graduate, leaving you stuck in what the internet has taken to calling “the permanent underclass”—capitalism’s version of hell? Be wary of excess enthusiasm: does it come from love, or might it come from fear—the ingratiating behavior of a people terrified of their incoming ruler?

At dinner last week, the question (which, at this point, surfaces every day) came up again: “Do you know what you’re doing after school?” One of my closest friends—charming and brilliant, who operated at three startups in four years before she came here—looked up and said, somewhat to herself, “What job can I even do?”

This is a story about people who have felt wanted and needed, by society if not by someone, for possibly their whole lives, now at one of the most well-resourced institutions in the world, where the opportunity cost of not starting a company is higher than that of starting one.

This is a story about people who were born or fought their way into the elite and want, very much, to stay there.

The implicit contract was that judgment alone—hard skills aside—has been, and will continue to be, the ticket in. Is that contract reaching the end of its enforceability?

Two weeks ago, a piece of financial fiction (a Substack memo written from June 2028, explicitly labeled a scenario, not a prediction) sparked a software sell-off, manifesting a speculative future just by speaking it into existence. My friend texted the group chat: “rn I’m in an AI doom spiral tho.” The reassurance came immediately (“you’re better equipped than 99% of the world!!”) and dissolved just as fast, because none of us knows which side of the 99% bifurcation we will actually land on. The New Yorker recently named this fear: that AI automation will upend the labor market and produce a new permanent underclass, a rebooted Marxist lumpenproletariat with nowhere to climb and no recourse.

What is dissonant about this particular moment, at this particular school, is that the people who have historically seen themselves above the bifurcation are not quite behaving like they believe it. In the same breath, a private equity guy will sign his return offer and wonder if the vertical SaaS companies his firm has been doubling, tripling down on for the last decade are going to get annihilated, wiped out by AI like the rest of us. He’ll sign because, up until now, everything has been okay: people said knowledge work would be automated, and here we still are. The dread is as present as the counterevidence, which is what makes it so hard to act on.

The career advice that’s been dispensed at conferences this year has converged on a single instruction. Sanjay Nadhavajhala from AI finance company F2 said, “I think analysts are going to operate more like VPs, and VPs are going to operate more like MDs. I think everyone levels up.”

“So what happens to the MDs?” the moderator asked.

“That’s the big question.”

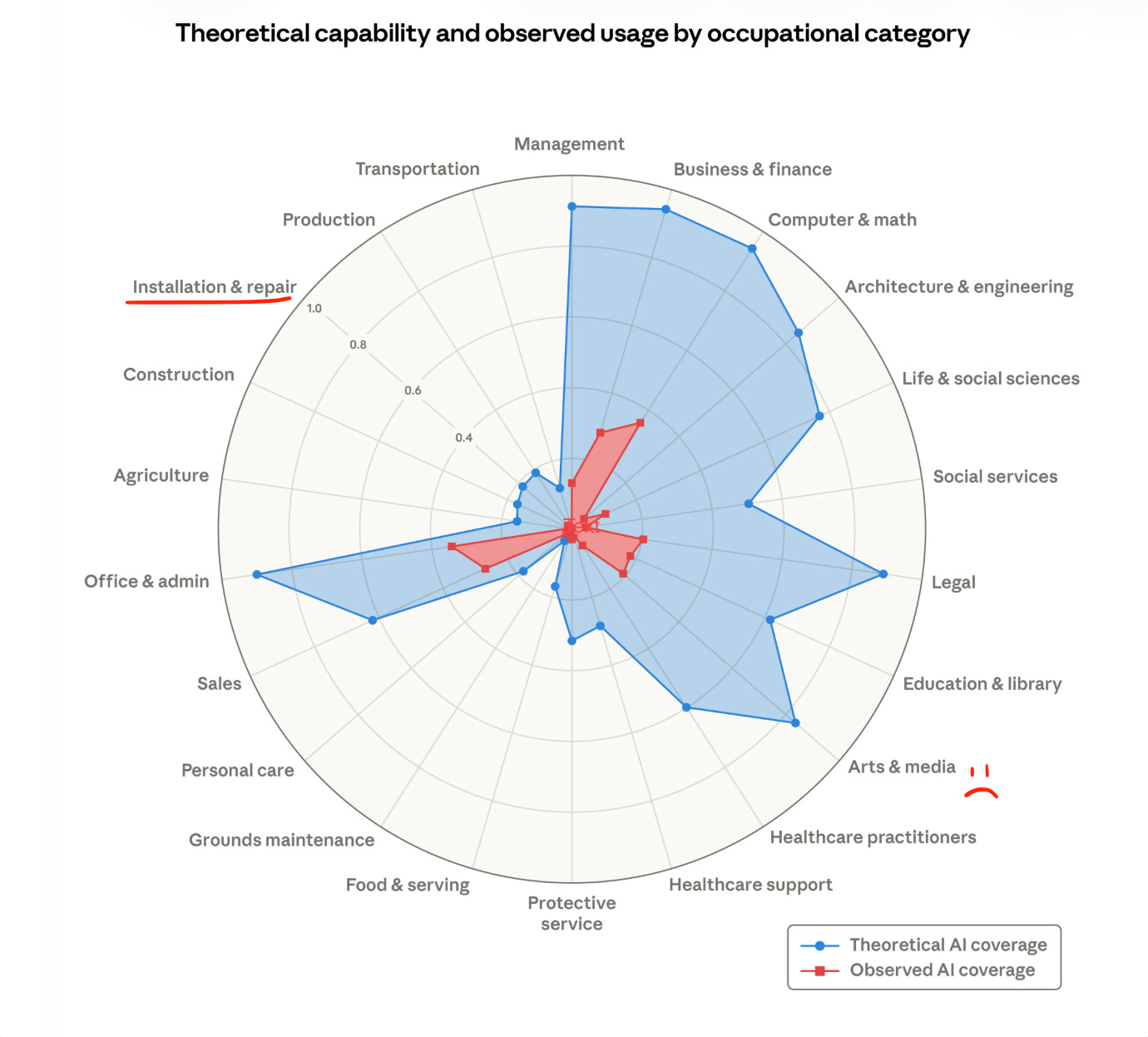

Another panelist said to find the fastest-moving water with the highest talent density (what does that even mean in plain English? Is the talent salmon?). A nonetheless reasonable bet in uncertain waters. These are not bad instructions, but they are execution instructions. We are told to “build something” to prepare for non-technical role interviews, we nod, and then we go home and open Claude Code to “allow” “allow” “allow” our way into building something we’re not sure we need. Ask me on a given day and I’ll tell you that, like half our class, I, too, am recruiting to plumb the toilets at Anthropic, at least angling for physical adjacency. (Their own labor market research suggests this instinct is not entirely irrational.)

I don’t know if living inside the AI bubble makes us better at knowing when not to use it or just more comfortable on crutches. The MIT researchers studying cognitive debt would say the latter, that outsourcing erodes capacity for discernment, the same way Botox renders a muscle inert. My foray into LinkedIn experimentation would say the former—posts I write without AI perform better than the ones I write with it, likely because no prompt I’ve written has mastered the inscrutable design of my intrusive thoughts.

I also don’t know what the end state of continuous bifurcation is. The consulting layoffs will come, in my opinion—for the research, the slide work, the framework production—because AI can approximate the output cheaply enough that the premium disappears. And then the person who walks into the room, or the implementation team, that’s valuable now—or is it? Because that layer gets thinner too, as AI gets better at cleaning messy GTM data and pattern-matching across implementations. Enterprise salespeople exist because trust and negotiation are structural necessities, but for how long, and at what scale?

All this to ask: what do I actually have that increases in value over time?

“Build something” isn’t a terrible place to start, so long as it’s not conflated with “build anything.” Our classmate, Celeste Bean, who runs AI workshops at GSB, has a framework for determining whether or not a product is worth building:

Will you need this output next week?

Do other people use it with their own data?

Should it run automatically?

Does it need to connect to other apps?

Do you have a specific picture of how it should work?

The more yes answers, the more the build is justified. The framework forces the questions: what’s the actual problem we’re trying to solve, and does our solution replicate or innovate on previous ones? The framework can’t tell you that. Only you can tell you that. Celeste is co-founding an AI consultancy to help execs figure out what AI means for them. The business model is, essentially, being the person who asks these questions.

There’s also a prior question. Before you open Claude Code, before you plumb, ask yourself:

When do I reach flow state?

What have I avoided, that I love, because I think it’s “silly” or “beneath me”?

These are questions worth sitting with before you build anything, 1) because AI will lower the barrier to whatever you build next, and 2) because what makes you you is what AI can never replicate. But only you can decide if it’s worth building to you. We wrote about learning how to listen to that answer.

And then go swimming once in a while. No, not in high-density, fast-moving waters. Take one day away, sans optimization, and honor the opinions of your nervous system. My brain needs that kind of inference time, and I suspect yours does, too.

New section alert—back by popular demand!!

you asked, we listened. bringing back our favorite parts of our original format, with a twist:

what we’re reading/watching:

Danielle: Rudyard Kipling’s “If” and revisiting oldie-but-goodie “How to Do What You Love” by Paul Graham

Céline: Speaking of permanent under/overclasses…Reading Aldous Huxley’s Brave New World in conjunction with watching Pluribus (yes, I know I’m late to the party, on both counts) is kind of a trip!

what we’re listening to:

Céline: Been listening to a lot of Goldie Boutilier lately. And for an IRL experience, she’s playing in SF on March 29!

Danielle: Cobra Man’s lo-fi punk sound and this Chinese internet folk/classical crossover 芒种

what we’re munching on:

Danielle: The new Zaytinya in Palo Alto (go via inKind for $$ off) and a crispy sweet potato omelette that has changed my mornings

Céline: And speaking of my techno-overlords, I caved to the algo and bought Ka’Chava shakes. I’m typically extremely skeptical of “Instagram brands,” but it has good ingredients and tastes yummy. And lord knows my gut/brain axis needs all the help it can get. Recommend the chocolate and strawberry, not so much the matcha.

🎁 Gift box winner: Kaci!

Kaci found us through LinkedIn (proof that occasionally LinkedIn delivers!) and is currently building Cimu, an at-home tailoring service for New Yorkers who don’t have time to deal with a tailor. Go check it out at shopcimu.com.

pls write back—we reply!

Love,

Céline and Danielle ♡

Written by: Danielle Zhang

Edited by: Céline Vendler

As someone further along in their career, the best advice I can give is to just put one foot in front of the other and let providence take care of the rest. Trying to optimize a long-term path in a rapidly changing future is a fool's errand, so your intuition to pursue what gives you energy is wise.

If anything, the advent of AI has crystallized the reality that we're not as in control of our destinies as we thought, and this hits harder for a portion of the population that hasn't typically had to wrestle with that truth.

i love this!! it is this constant need to optimize for long term growth that makes us more entrenched in decision paralysis than ever. very well put :)